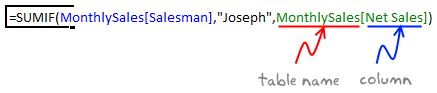

With Excel 2007, Microsoft introduced a powerful and useful feature called Tables. One of the advantages of Tables is that you can write legible formulas by using structured references. That means you can write easy-to-understand formulas that refer to table columns by name.
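
As an illustrative sketch, here is a structured reference next to the equivalent plain range formula. The table name `Sales`, the column name `Amount` and the range C2:C500 are assumptions made up for this example, not taken from the original post.

```
Structured reference (hypothetical table "Sales", column "Amount"):
    =SUM(Sales[Amount])

Equivalent cell-range formula (assuming that column sits in C2:C500):
    =SUM(C2:C500)
```

Both return the same total under that assumption, but the first one says what it is adding up and keeps working as rows are added to the table.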

But there is a problem. When you write such a formula and drag the formula cell sideways to fill the remaining cells, Excel shifts the table column references and thus makes your formulas almost useless.
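
To see what goes wrong, here is a sketch assuming a hypothetical table named `Sales` with the columns Product, Region, Amount and Cost, in that order, plus a summary grid with product names in column E and region names in row 1. None of these names come from the original post.

```
Formula typed in F2 (hypothetical table and layout):
    =SUMIFS(Sales[Amount], Sales[Product], $E2, Sales[Region], F$1)

After dragging F2 one cell to the right, G2 would typically contain:
    =SUMIFS(Sales[Cost], Sales[Region], $E2, Sales[Amount], G$1)
```

Every table column reference has moved one column to the right, just as a relative cell reference would, so the formula now sums and filters on the wrong columns.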

Well, there is a simple workaround for this problem.

Use copy & paste.

Instead of dragging the cell to fill the formulas, copy it and paste it into the remaining cells. In this case, Excel will preserve all table references while changing the cell references accordingly. See this demo to understand:
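
In formula terms, the effect can be sketched like this, assuming a hypothetical table named `Sales` (columns Product, Region and Amount) and a summary grid with product names in column E and region names in row 1. These names are illustrative only.

```
Formula in F2 (hypothetical table and layout):
    =SUMIFS(Sales[Amount], Sales[Product], $E2, Sales[Region], F$1)

After copying F2 and pasting it into G2, the formula should read:
    =SUMIFS(Sales[Amount], Sales[Product], $E2, Sales[Region], G$1)
```

The `Sales[...]` references are unchanged, while the relative part of `F$1` has moved to `G$1`, which is what a summary grid needs.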

Share your Table Tips & Tricks

Ever since I discovered the tables feature in Excel 2007, I have been using them to save time and simplify my work with data. Tables have several useful features that make life simple for analysts and data junkies everywhere.

What about you? Do you use Excel Tables? What are your top table tricks? Please share in the comments.

More on Excel Tables

If you are using Excel 2007 or later, I encourage you to learn Excel Tables. They will make your life simpler. Go through the articles below to learn more.
