A very happy new year to you. May 2016 be your most awesome year yet.

This year too, I plan to share tutorials, tips, podcasts & videos to make you awesome. I hope to focus on:

- Excel 2016 – exploring new features

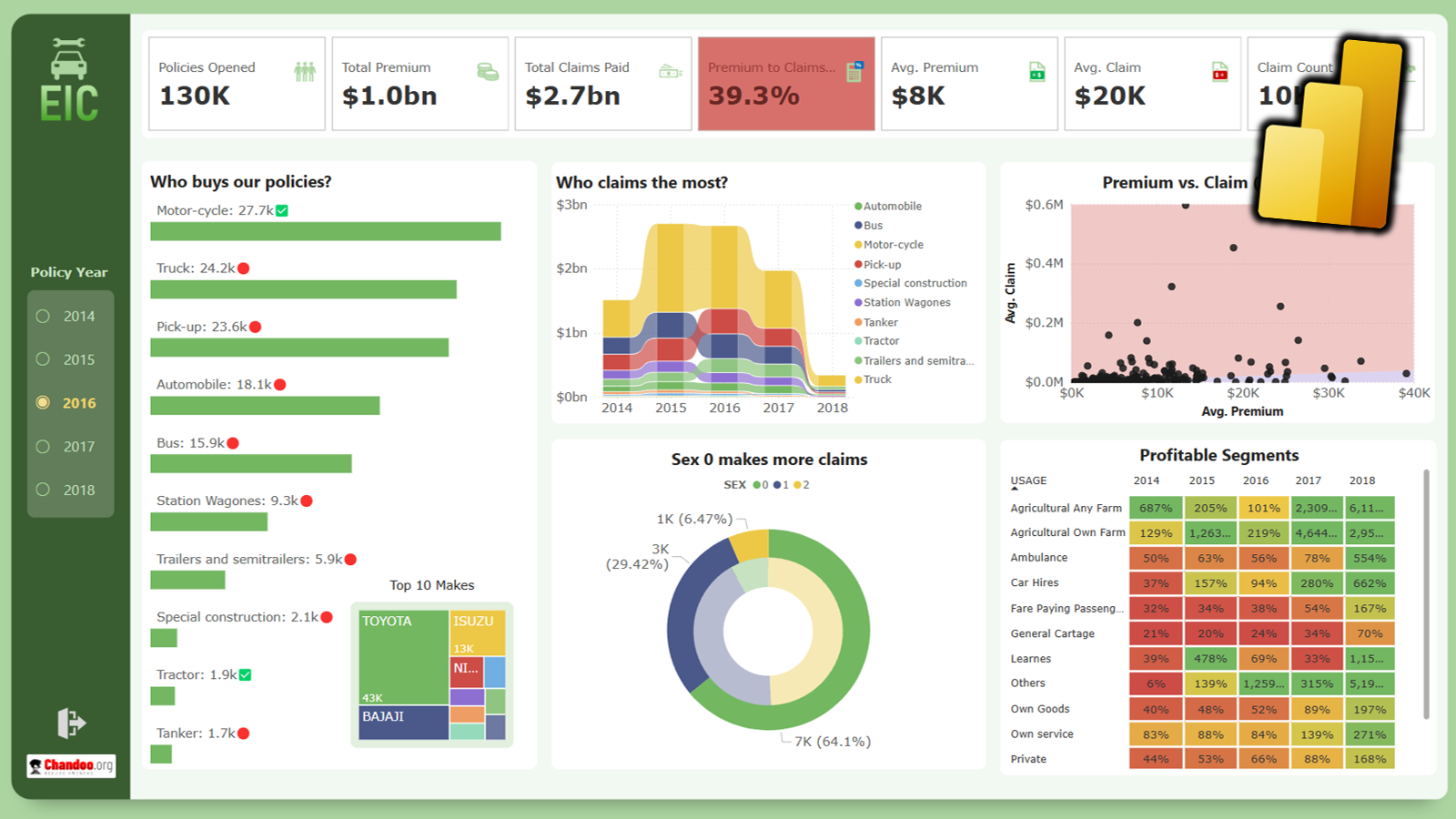

- Power BI – what it is and how it makes you awesome

- Write a sequel to The VLOOKUP Book.

- Launch a new online course on Power BI & New Excel.

- Run 2 more batches of the 50 Ways to Analyze Data program in Feb & July 2016.

- Run another dashboard contest on Chandoo.org

- Write about awesome ways to work with data – formulas, charts, tables, pivots etc.

- Talk about many advanced and work specific Excel scenarios in the podcast

But wait, what do you want to learn more about?

While these are my plans, I want to make sure Chandoo.org helps you in the best way possible. So please take a minute and answer this one-question survey.

What is the one topic / area of Excel you want to master in 2016?

Use the form below to answer the question. Alternatively, you may post your answer in the comments section.

Thank you.